[dev.icinga.com #8137] Maximum concurrent service checks #2468

Comments

Updated by mfriedrich on 2015-01-07 14:30:58 +00:00

"max_concurrent_checks" is an Icinga 1.x configuration setting that has not been ported to Icinga 2. I am not entirely sure it would really solve the issue, since the skipped checks would just fill yet another check queue to be run later. Monitoring the instances and their health state sounds more reasonable to me, especially when resource upgrades are needed on demand.

Updated by JayNewman on 2015-01-08 23:59:50 +00:00

The point I am trying to convey is that it is better to end up with a large backlog of service checks and have time to correct the configuration than to have an insane number of concurrent checks running, which will crash the server (I measured a load level over 500 at times) and also flood the DNS servers that are queried with each service check. The latter means that not only do we have a problem with the Icinga monitoring, we have also seriously impacted a production environment in which core services rely on DNS. We do not want to risk a monitoring tool being the cause of a production outage; it is supposed to help us avoid outages.

Updated by mfriedrich on 2016-02-25 00:29:48 +00:00

Updated by mfriedrich on 2016-03-04 15:50:06 +00:00

Updated by mfriedrich on 2016-03-31 10:35:03 +00:00

Updated by kowalskimn on 2016-04-01 07:56:36 +00:00

Same problem here. I cut my instance down from 6 GB of RAM to 4 GB and Icinga started crashing, because some checks are more RAM-hungry than others and too many of them were running at the same time. This caused the OOM killer to kill icinga2 itself, since it was using more RAM than any single check process. I had to scale back up to 6 GB to stop the crashes. If I add more hosts to the setup, this will just not scale, unless I spread the checks across a few separate machines, which is pretty much the same solution as adding more RAM to one. Setting a maximum number of concurrent checks sounds like a good idea; more sophisticated solutions, such as having icinga2 adjust the number of checks based on RAM/CPU utilization, sound overly complex.

Updated by mfriedrich on 2016-04-18 08:36:37 +00:00

Updated by gbeutner on 2016-05-10 09:26:11 +00:00

Updated by gbeutner on 2016-05-10 09:30:04 +00:00

Applied in changeset f6f3bd1.

Updated by mfriedrich on 2016-05-10 10:25:26 +00:00

Tests

Updated by mfriedrich on 2016-05-11 07:40:47 +00:00

Updated by mfriedrich on 2016-05-11 12:40:49 +00:00

Updated by gbeutner on 2016-05-18 12:02:40 +00:00

This issue has been migrated from Redmine: https://dev.icinga.com/issues/8137

Created by JayNewman on 2014-12-20 18:11:34 +00:00

Assignee: gbeutner

Status: Resolved (closed on 2016-05-10 09:30:03 +00:00)

Target Version: 2.4.8

Last Update: 2016-05-11 07:40:46 +00:00 (in Redmine)

From older examples, it appears that at one point in time it was possible to specify "max_concurrent_checks", but apparently this is no longer the case.

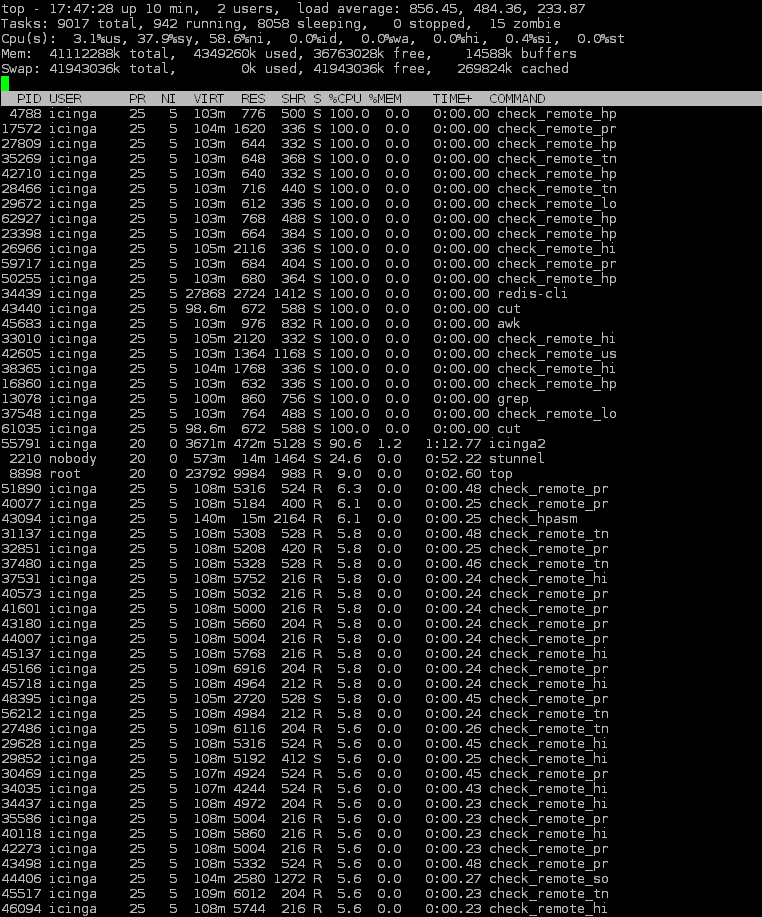

My environment had a problem where the check_interval was set too small on some service checks, and the satellite nodes were each running over 9,000 processes trying to keep up. This not only caused instability but also flooded the DNS server.

Can you please implement some form of limitation on concurrent checks, to act as a safety net? An error in configuration should ideally cause a backlog rather than overwhelming the infrastructure.
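To illustrate the kind of safety net requested here, the following is a minimal Python sketch of a capped check scheduler. It is not Icinga 2 code and does not reflect how the eventual fix was implemented; `MAX_CONCURRENT_CHECKS`, `run_checks`, `fake_check`, and `ConcurrencyTracker` are hypothetical names chosen for the example. It only shows the principle: with a fixed worker limit, extra checks wait in a queue (a backlog) instead of spawning more processes.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical limit for this sketch; not an actual Icinga 2 setting name.
MAX_CONCURRENT_CHECKS = 4


class ConcurrencyTracker:
    """Records the highest number of checks observed running at once."""

    def __init__(self):
        self._lock = threading.Lock()
        self.running = 0
        self.peak = 0

    def __enter__(self):
        with self._lock:
            self.running += 1
            self.peak = max(self.peak, self.running)
        return self

    def __exit__(self, *exc):
        with self._lock:
            self.running -= 1
        return False


def run_checks(num_checks, limit=MAX_CONCURRENT_CHECKS, check_duration=0.01):
    """Run num_checks simulated checks with at most `limit` in flight.

    Checks beyond the limit are queued by the executor and start only
    when a worker becomes free, so overload turns into a backlog.
    """
    tracker = ConcurrencyTracker()

    def fake_check(check_id):
        # Stand-in for a real check plugin; it just sleeps briefly.
        with tracker:
            time.sleep(check_duration)
        return check_id

    with ThreadPoolExecutor(max_workers=limit) as pool:
        results = list(pool.map(fake_check, range(num_checks)))
    return results, tracker.peak


if __name__ == "__main__":
    results, peak = run_checks(20)
    print(f"completed {len(results)} checks, peak concurrency {peak}")
```

With a cap like this, a too-small check_interval would show up as growing check latency rather than thousands of simultaneous processes and DNS queries.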

If you need more details, I discussed and demonstrated this with Cornelius Wachinger.

Attachments

Changesets

2016-05-10 09:26:55 +00:00 by gbeutner f6f3bd1

2016-05-12 09:08:21 +00:00 by gbeutner f08d378

2016-05-12 11:47:32 +00:00 by gbeutner 97a5091

2016-05-12 12:06:47 +00:00 by gbeutner 01e58b4

Relations:
